Innovation Transforms Quantum Processing of Image-Based Scientific Data

Berkeley Lab Team Introduces a Quantum Pixel Representation Framework that Outperforms Other Quantum Image Representations

June 9, 2022

By Carol Pott

Contact: cscomms@lbl.gov

As the tools for science (such as telescopes, particle accelerators, and gene sequencers) have become more sophisticated and exacting, the data that we are able to gather from them is in danger of overwhelming current statistical and machine learning approaches for analysis and understanding. These tools generate hundreds of petabytes of data that must be processed to extract the findings that scientists seek. To put the scope of the issue in context, just one petabyte is the equivalent of approximately 500 billion pages of printed text, about 20 million tall file cabinets stuffed with paper.

Analyzing hundreds of petabytes of image-based data with current classical algorithms is particularly challenging and time-consuming, so scientists are clamoring for more efficient ways to handle and process this complex scientific information. Quantum computing could offer one such approach, with the potential to speed up analysis in a wide variety of fields, including image processing.

A team from Lawrence Berkeley National Laboratory (Berkeley Lab)’s Scientific Data and Applied Mathematics and Computational Research (AMCR) divisions recently published work in Scientific Reports describing its solution: a novel, unified framework called the quantum pixel representation (QPIXL) framework that outperforms other quantum image representations. QPIXL produces circuits that require fewer quantum gates without introducing additional (ancilla) qubits. The state-of-the-art framework also includes a compression algorithm that further reduces gate complexity by up to 90% without significantly sacrificing image quality. An implementation of the algorithms is publicly available as part of the Quantum Image Pixel Library (QPIXL++).

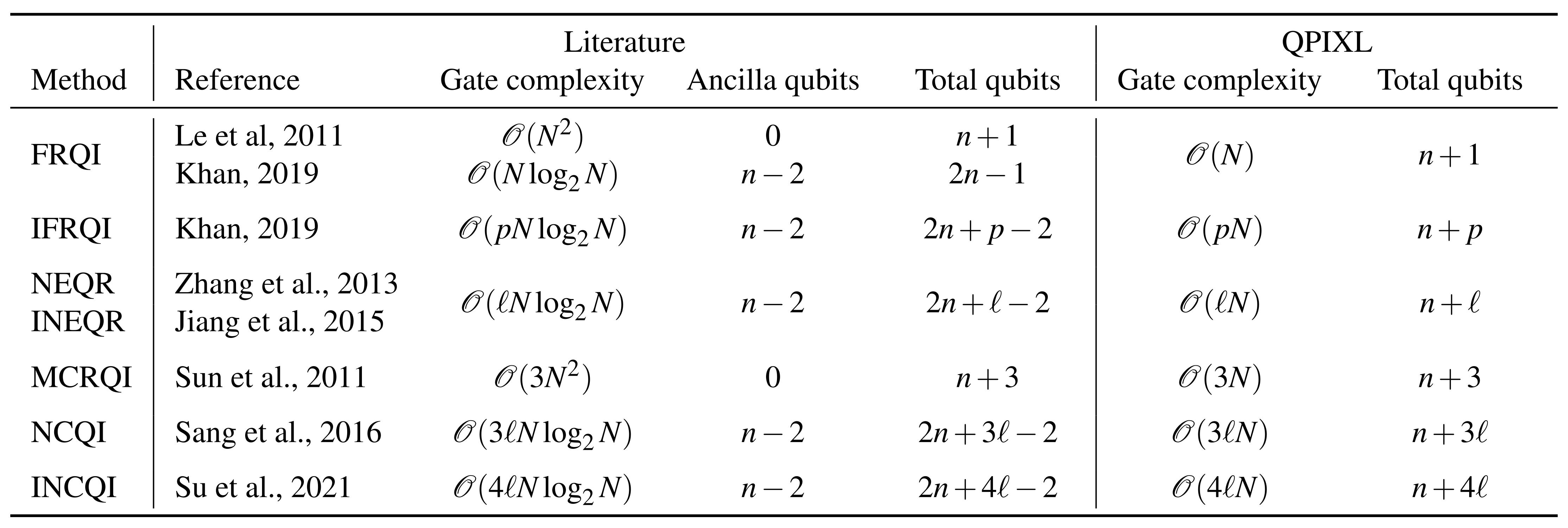

Summary of gate complexities and qubit counts for preparing the different quantum image representation (QIR) states covered in this work and QPIXL, for an image with N = 2^n pixels. For the IFRQI state, the bit depth is given by 2p, and for the (I)NEQR, MCRQI, and (I)NCQI states it is given by ℓ. (Credit: Amankwah, M.G., Camps, D., Bethel, E.W., et al. Quantum pixel representations and compression for N-dimensional images. Sci Rep 12, 7712 (2022). https://doi.org/10.1038/s41598-022-11024-y)

“There are several existing methods for representing images in quantum computers; however, after we studied these methods, we were able to identify important drawbacks that we used as a motivation to create the QPIXL framework,” said Talita Perciano, lead principal investigator on this project. “We realized we could develop an overarching representation that generalizes several of the most well-known existing quantum image representations.”

The team then realized they could build simpler circuits whose gate count scales linearly with the number of pixels, a quadratic improvement over other approaches. Based on these new circuits, they developed a simple and effective circuit- and image-compression method. They implemented QPIXL using QCLAB++, an in-house, C++-based software tool.
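The article does not spell out how the linear-scaling circuits are built. One standard way to get a gate count linear in the number of pixels is to decompose the uniformly controlled rotation that writes each pixel's intensity onto a single color qubit into an alternating chain of RY and CNOT gates, whose angles come from a Walsh-Hadamard transform read out in Gray-code order (Möttönen et al., 2004). The Python sketch below assumes an FRQI-style encoding with intensities in [0, 1] mapped to angles in [0, π/2], and a simplified compression rule that zeroes the smallest transformed angles. All function names are hypothetical, and this is an illustration of the idea, not the QPIXL++ implementation.

```python
import numpy as np


def gray(i: int) -> int:
    """Binary-reflected Gray code of i."""
    return i ^ (i >> 1)


def control_bit(i: int, N: int) -> int:
    """Address bit used as CNOT control after the i-th RY: the bit that
    changes between gray(i) and gray(i + 1), wrapping around at the end."""
    return (gray(i) ^ gray((i + 1) % N)).bit_length() - 1


def rotation_angles(alpha: np.ndarray) -> np.ndarray:
    """RY angles for the CNOT/RY chain realizing a uniformly controlled RY(alpha_j).

    phi_i = (1/N) * sum_j (-1)^popcount(gray(i) & j) * alpha_j, i.e. a
    Walsh-Hadamard transform of alpha read out in Gray-code order.
    """
    N = len(alpha)
    signs = np.array([[(-1) ** bin(gray(i) & j).count("1") for j in range(N)]
                      for i in range(N)])
    return signs @ alpha / N


def ry(phi: float) -> np.ndarray:
    c, s = np.cos(phi / 2), np.sin(phi / 2)
    return np.array([[c, -s], [s, c]])


def simulate(phi: np.ndarray) -> np.ndarray:
    """Statevector of the chain; amp[j, t] is the amplitude of |address j>|color t>."""
    N = len(phi)
    amp = np.zeros((N, 2))
    amp[:, 0] = 1 / np.sqrt(N)  # Hadamards on the address qubits, color qubit in |0>
    rows = np.arange(N)
    for i in range(N):
        amp = amp @ ry(phi[i]).T                        # RY(phi_i) on the color qubit
        flip = ((rows >> control_bit(i, N)) & 1) == 1
        amp[flip] = amp[flip][:, ::-1]                  # CNOT: flip color where control bit is 1
    return amp


def frqi_state(theta: np.ndarray) -> np.ndarray:
    """Target state (1/sqrt N) sum_j |j>(cos theta_j |0> + sin theta_j |1>)."""
    return np.stack([np.cos(theta), np.sin(theta)], axis=1) / np.sqrt(len(theta))


def compress(phi: np.ndarray, fraction: float) -> np.ndarray:
    """Hypothetical compression rule: zero the smallest-magnitude fraction of angles."""
    out = phi.copy()
    k = int(round(fraction * len(out)))
    out[np.argsort(np.abs(out))[:k]] = 0.0
    return out


def gate_count(phi: np.ndarray) -> tuple[int, int]:
    """(RY count, CNOT count) after dropping RY(0) and cancelling CNOT pairs.

    CNOTs that share the color qubit as target commute, so within a run of CNOTs
    between two kept RY gates only controls used an odd number of times survive.
    """
    N = len(phi)
    n_cx, pending = 0, set()
    for i in range(N):
        if phi[i] != 0:
            n_cx += len(pending)
            pending = set()
        pending ^= {control_bit(i, N)}
    return int(np.count_nonzero(phi)), n_cx + len(pending)


rng = np.random.default_rng(0)
pixels = rng.random(16)           # hypothetical 4x4 grayscale image, values in [0, 1]
theta = pixels * np.pi / 2        # FRQI-style intensity-to-angle map (assumption)
phi = rotation_angles(2 * theta)  # RY(2*theta)|0> = cos(theta)|0> + sin(theta)|1>
assert np.allclose(simulate(phi), frqi_state(theta))
for frac in (0.0, 0.5, 0.75):
    c = compress(phi, frac)
    fidelity = np.sum(simulate(c) * frqi_state(theta)) ** 2  # amplitudes are real
    print(f"{frac:.0%} zeroed: (RY, CNOT) = {gate_count(c)}, fidelity = {fidelity:.4f}")
```

For N pixels the uncompressed chain in this sketch uses N RY and N CNOT gates plus the Hadamards on the address register, which is where linear scaling comes from. Zeroing an angle removes its RY gate and can let neighboring CNOTs cancel, so discarding small angles trades gate count against fidelity.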

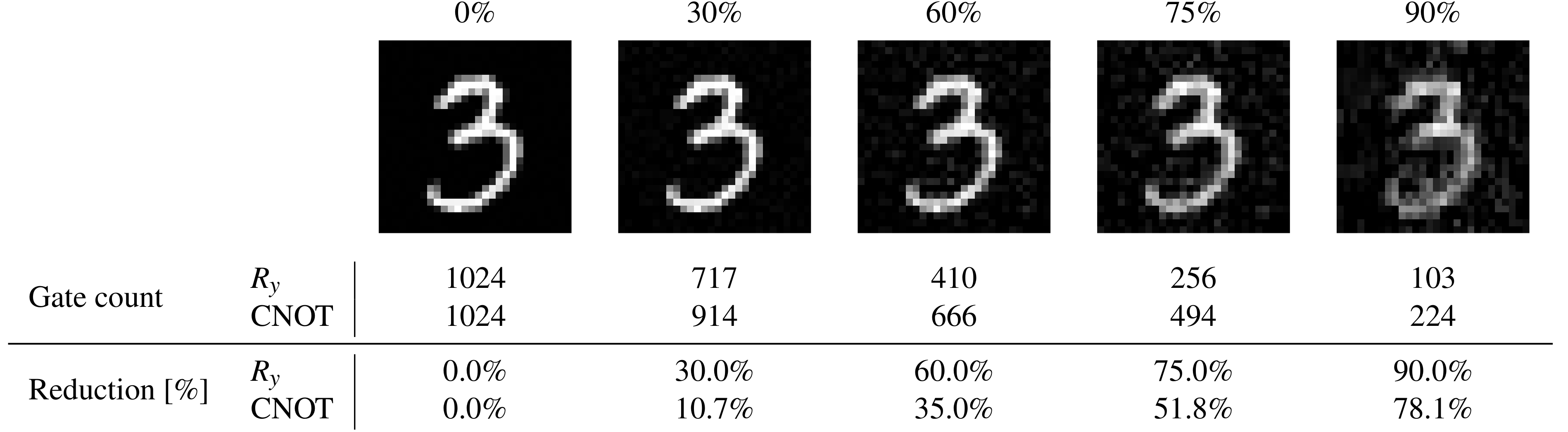

28x28 images from the MNIST database (a large database of handwritten digits commonly used to train image processing systems), simulated with QPIXL++ at various compression levels, together with the corresponding gate counts of the 11-qubit circuit. The final two rows list the reduction in the number of gates compared with the uncompressed circuits. This experiment identifies a potential application of our novel quantum image representation method with compression: developing efficient machine-learning-based classification algorithms for quantum computers. (Credit: M. Amankwah, D. Camps, E.W. Bethel, R. Van Beeumen, T. Perciano).
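The 11-qubit count in the caption can be reproduced with a little arithmetic, assuming (the caption does not say so) that the 28x28 = 784 pixels are zero-padded to the next power of two, 1024 = 2^10, so that 10 qubits index the pixel positions and one more qubit carries the intensity. The helper name below is hypothetical.

```python
def qpixl_qubits(height: int, width: int) -> int:
    """Qubits for an FRQI-style encoding of a height x width grayscale image.

    Hypothetical helper. Assumes the pixel count is zero-padded to the next
    power of two, with n address qubits for 2^n positions plus one qubit
    that carries the intensity.
    """
    n_pixels = height * width
    n_address = (n_pixels - 1).bit_length()  # ceil(log2(n_pixels)) without floats
    return n_address + 1


print(qpixl_qubits(28, 28))  # 11: 784 pixels padded to 1024 = 2**10, i.e. 10 address qubits + 1
```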

“It was timely for us to develop QPIXL, as Roel Van Beeumen and I had just released QCLAB++, a software package for rapid development and prototyping of quantum algorithms,” said Daan Camps, a staff member in the Advanced Technology Group at the National Energy Research Scientific Computing Center (NERSC). “This allowed us to scale QPIXL and simulate pixel data equivalent to a 4K video fragment.”

In the post-Moore era, one of the challenges to making quantum computing a viable platform is to reduce the complexity of quantum circuits to accommodate the limitations of current devices. Current noisy intermediate-scale quantum (NISQ) devices are characterized by low qubit counts and high gate error rates, and they also suffer from short qubit decoherence times. To produce high-fidelity results on a NISQ device, quantum circuits must be optimized to have short depth. “By leveraging techniques from applied math, we were able to reduce to optimal circuit depth and make QPIXL representation NISQ friendly,” said Van Beeumen, co-principal investigator on the project and a research scientist in the AMCR division.

Quantum image processing transfers the classical image processing pipeline to the quantum computing framework, and a variety of quantum image representation methods have already become popular because they flexibly encode the positions and colors of an image's pixels in a normalized quantum state. In addition, processing operations can be performed simultaneously on all pixels in the image by exploiting the quantum mechanical phenomenon of superposition. QPIXL produces circuits requiring fewer quantum gates and includes a compression algorithm that further reduces gate complexity without significantly sacrificing image quality.
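To make the idea of a normalized state concrete, the Python sketch below builds FRQI-style amplitudes: the pixel positions sit in a uniform superposition, and each pixel's intensity is stored as a rotation angle on a single color qubit. This encoding, the linear intensity-to-angle map, and the names `encode` and `decode` are assumptions for illustration. Because cos²θ + sin²θ = 1 for every pixel, the state is normalized automatically, and each intensity can be read back from the measurement probabilities at its position.

```python
import numpy as np


def encode(pixels) -> np.ndarray:
    """FRQI-style amplitudes: psi[k, c] is the amplitude of |position k>|color c>.

    Assumes intensities in [0, 1] and a pixel count that is a power of two
    (pad the image otherwise) so the positions fit exactly on the address qubits.
    """
    theta = np.asarray(pixels, dtype=float).ravel() * np.pi / 2
    return np.stack([np.cos(theta), np.sin(theta)], axis=1) / np.sqrt(theta.size)


def decode(probs: np.ndarray) -> np.ndarray:
    """Recover intensities from exact probabilities P(k, c) = |psi[k, c]|^2."""
    theta = np.arctan2(np.sqrt(probs[:, 1]), np.sqrt(probs[:, 0]))
    return theta / (np.pi / 2)


image = np.array([[0.0, 0.25], [0.5, 1.0]])  # hypothetical 2x2 grayscale image
psi = encode(image)
probs = psi ** 2
print(probs.sum())        # total probability of the normalized state
print(probs.sum(axis=1))  # each position is equally likely: 1/N
print(decode(probs))      # intensities read back from the probabilities
```

The sketch uses exact probabilities. On real hardware they would have to be estimated from repeated measurements, so the recovered intensities would carry sampling error.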

This work was supported by the Laboratory Directed Research and Development Program of Berkeley Lab, the Department of Energy’s Office of Science Advanced Scientific Computing Research (ASCR) Program, and the Sustainable Research Pathways Program, a partnership between the Sustainable Horizons Institute and Berkeley Lab’s Computing Sciences Area.

About Berkeley Lab

Founded in 1931 on the belief that the biggest scientific challenges are best addressed by teams, Lawrence Berkeley National Laboratory and its scientists have been recognized with 16 Nobel Prizes. Today, Berkeley Lab researchers develop sustainable energy and environmental solutions, create useful new materials, advance the frontiers of computing, and probe the mysteries of life, matter, and the universe. Scientists from around the world rely on the Lab’s facilities for their own discovery science. Berkeley Lab is a multiprogram national laboratory, managed by the University of California for the U.S. Department of Energy’s Office of Science.

DOE’s Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit energy.gov/science.