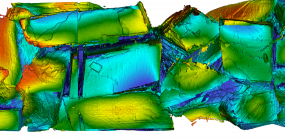

Berkeley Researchers Advance Fluid Flow Modeling for Better Energy Production

July 16, 2025

Berkeley researchers have set a new benchmark in fluid flow modeling with their Chombo-Crunch software, capturing unprecedented detail of how fluids move deep underground, a breakthrough that could transform energy production, battery safety, and water use in manufacturing.