The Power of Numerical Analysis in Quantum Chemistry

Berkeley researchers use numerical analysis to illustrate the role of the finite-size error in materials simulation

May 28, 2024

By Kathy Kincade

Contact: cscomms@lbl.gov

Dr. Lin Lin (left), a faculty scientist in the Applied Mathematics and Computational Research division at Berkeley Lab and a Professor of Mathematics at the University of California, Berkeley; and Dr. Xin Xing (right), a former postdoctoral scholar from Lin’s group at UC Berkeley who is now at Apple.

"Numerical analysis is the study of algorithms for the problems of continuous mathematics." (An Applied Mathematician's Apology, p. 1)

Mathematicians like Dr. Lin Lin, a faculty scientist in the Applied Mathematics and Computational Research division at Lawrence Berkeley National Laboratory (Berkeley Lab) and a Professor of Mathematics at the University of California, Berkeley, and Dr. Xin Xing, a former postdoctoral scholar from Lin’s group at UC Berkeley who is now at Apple, have years of experience practicing numerical analysis. They and many of their colleagues are increasingly recognizing the need to convey the underlying concepts and the impact of numerical analysis in ways that fellow domain scientists may find useful.

“Many scientific computing problems are described by continuous mathematics, but to solve them on a computer the problem must be represented using discrete quantities such as a finite number of points. This process can introduce many interesting problems,” Lin said.

For example, quantum mechanics is often used to compute microscopic physical properties of a particular material structure. These calculations are invaluable in material design, as they allow a material's properties to be predicted before actual chemical synthesis or manufacturing. However, applying these calculations directly to realistic materials can be challenging because the number of atoms is often too large to be computationally tractable. A common workaround is to model only a small portion of the whole system and hope that the computed results are good approximations. The difference between the results computed from a partial system and the exact values for the full system (conceptually of infinite size) is known as the finite-size error.

“Numerical analysis is the key for finding efficient algorithms for such a discretization process, or even for understanding the underlying physics phenomenon associated with the discretization, such as the finite-size error,” Lin said.

Building on previous work exploring finite-size error, in a paper published March 28 in Physical Review X (PRX), Xing and Lin introduce a new approach for understanding the finite-size error in condensed matter systems that uses coupled-cluster (CC) theory, one of the most accurate methods for treating electron correlation in quantum many-body systems. To do this, they developed novel tools in numerical analysis that can mathematically explain the various sources of the finite-size error and provide a rigorous error estimate.

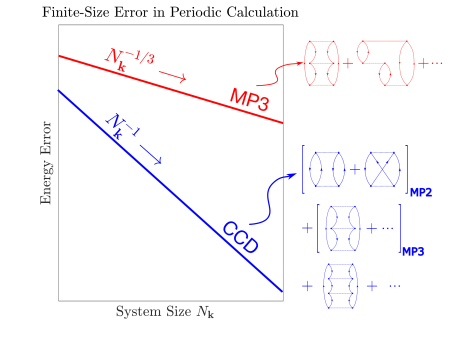

Using coupled cluster doubles (CCD) theory – mathematically the simplest form of CC theory – as an example, they set out to describe and estimate the scaling of the finite-size error with respect to the size of the computational domain. Understanding the finite-size error and how it scales as the system increases in size is important in a variety of practical applications, Xing noted; in material design, for example, reducing the finite-size error means that the physical properties of a target material can be predicted more accurately.

Understanding the finite-size error and its finite-size scaling is important in a variety of applications. In material design, for example, reducing this error enables more accurate prediction of the physical properties of a target material. (Credit: Xin Xing)

“In quantum chemistry, there are approximate models and theories with different levels of accuracy, and the coupled cluster theory serves as the ‘gold standard’ in molecular quantum chemistry,” Xing said. “One challenge is that for different theories, their finite-size error scaling can be very different. Previously, we’ve analyzed the finite-size error for models of lower accuracy, such as Hartree-Fock and low order many-body perturbation theories, which paves the way to our current work. But the coupled cluster theory is considerably more challenging to analyze.”

Numerical analysis has been fundamental to this approach: it provides the right tools to describe the source of the finite-size error and to derive a rigorous error estimate, Xing emphasized. “It connects the finite-size error in quantum chemistry calculations to a fundamental concept in numerical analysis called quadrature error analysis.”

Quadrature is “a fancy term that says you want to compute the value of an integral and replace it by a finite sum, a process that introduces a quadrature error,” Lin explained. “What was not shown before is that the finite-size error – despite its complex nature in physics – at its core boils down to a quadrature error.”
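To make the idea of a quadrature error concrete, here is a minimal sketch in Python. It is a generic textbook illustration, not the quadrature that arises in the CCD analysis: the integrand (an exponential), the midpoint rule, and the function name `riemann_sum` are all assumptions chosen for simplicity. It replaces an integral with a finite sum and reports how far the sum is from the exact value as the number of sample points grows.

```python
import math

def riemann_sum(f, a, b, n):
    """Approximate the integral of f over [a, b] with n midpoint samples."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))

# Illustrative integrand only: the integral of exp(x) over [0, 1] is e - 1.
exact = math.e - 1.0

# Quadrature error = |finite sum - exact integral| for several grid sizes.
errors = {n: abs(riemann_sum(math.exp, 0.0, 1.0, n) - exact)
          for n in (4, 8, 16, 32)}

for n, err in errors.items():
    print(f"n = {n:2d}  quadrature error = {err:.2e}")
```

For this smooth integrand the error shrinks by roughly a factor of four each time the number of points doubles. In this simple setting the number of sample points plays a role loosely analogous to the size of the simulation cell: using more points (or a larger cell) brings the finite sum closer to the exact, infinite-size answer.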

As a result, this new work implies that many tools in the numerical analysis community may be useful in reducing the finite-size error in quantum sciences. “This is a very exciting interdisciplinary direction,” Xing said.

Solving a Mystery, Filling a Gap

Even though researchers have previously proposed various analytical estimates, rigorous and comprehensive descriptions and estimates of the finite-size error for many quantum chemistry methods are mostly missing. “This new work fills that gap” and solves the mystery of an unexpected inverse volume scaling of this error in CCD calculations, Xing noted. Inverse volume scaling means that the finite-size error is inversely proportional to the volume of the simulation cell used to model the system, so the error falls much more quickly than under inverse linear scaling, in which the error is inversely proportional to the side length of the cell. For example, if a 3D simulation cell measures 1 unit length along each axis, doubling it to 2 unit lengths along each axis cuts the finite-size error to 1/2 of its original value under inverse linear scaling. Under inverse volume scaling, the cell volume grows eightfold, so the error drops to 1/8 of its original value, a much more rapid decrease.
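The arithmetic behind the two scaling laws can be checked in a few lines of Python. This is only a sketch of the comparison described above; the helper name `error_ratio` is hypothetical, and the model assumes the error follows a pure power law in the cell's edge length L.

```python
def error_ratio(length_factor, exponent):
    """Ratio of new error to old error when every cell edge is multiplied
    by length_factor, assuming error ~ L ** (-exponent)."""
    return length_factor ** (-exponent)

# Doubling each edge of a 3D cell (L: 1 -> 2, so the volume grows 8x).
linear = error_ratio(2.0, 1)  # inverse linear scaling: error ~ 1 / L
volume = error_ratio(2.0, 3)  # inverse volume scaling: error ~ 1 / L**3

print(linear, volume)  # 0.5 0.125
```

The printed ratios are the fractions of the original error that remain after doubling the cell: one half under inverse linear scaling and one eighth under inverse volume scaling.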

Resolving this mystery requires a rigorous mathematical understanding of CCD and its finite-size error, and getting there meant Xing and Lin had to overcome some new mathematical challenges. For example, CC theory is a very complicated method even for a standalone finite-size system. This makes it technically even more challenging to analyze its finite-size error, which corresponds to analyzing its convergence when applying the CC calculation to many systems of increasing sizes (all the way up to the whole system, which conceptually is characterized as an infinite-sized one). In fact, it already takes some effort to translate the finite-size error problem into a purely numerical analysis problem (the quadrature error).

“The translated numerical quadrature error estimate problem itself is also technically challenging, which is why we developed new tools in numerical analysis to address this problem,” Lin said.

As noted by the PRX reviewer, this method of estimating finite-size error rates settles once and for all how the finite-size error should behave, Lin added. “Practitioners can now apply this inverse volume scaling law with confidence.”

It has other implications for practitioners, method developers, and theorists as well. For practitioners, reducing finite-size errors in quantum chemistry methods using techniques such as power-law extrapolation requires an in-depth understanding of the error scaling. This is particularly important when calculations are constrained to small-sized systems due to the steep increase of the computational cost with respect to the system size and limited resources. For method developers, Xing and Lin have shown how to connect the finite-size error to the mature applied mathematical domain of numerical quadrature calculations, which points to new methods for further finite-size error reduction. Meanwhile, for theorists, some critical questions persist: How can the finite-size error analysis be integrated with the study of other equally important error sources in quantum chemistry calculations? How should the finite-size error behavior in many more complicated systems be analyzed?
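As a rough illustration of what power-law extrapolation looks like once the scaling is known, the sketch below fits the model E(V) = E_inf + c / V to energies computed at a few cell volumes and reads off the infinite-size estimate E_inf. Everything here is an assumption made for illustration: the function name `extrapolate_inverse_volume` is hypothetical, the data are synthetic numbers generated from the model itself rather than results of any real calculation, and real workflows may use different fitting procedures.

```python
def extrapolate_inverse_volume(volumes, energies):
    """Least-squares fit of E(V) = E_inf + c / V. Returns (E_inf, c)."""
    xs = [1.0 / v for v in volumes]  # the model is linear in x = 1 / V
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(energies) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, energies))
    c = sxy / sxx                    # slope: prefactor of the 1 / V term
    e_inf = mean_y - c * mean_x      # intercept: value at 1 / V -> 0
    return e_inf, c

# Synthetic data (made up for this example): exact limit -1.0, prefactor 0.3.
volumes = [1.0, 8.0, 27.0, 64.0]
energies = [-1.0 + 0.3 / v for v in volumes]

e_inf, c = extrapolate_inverse_volume(volumes, energies)
print(f"extrapolated infinite-size value: {e_inf:.6f}, prefactor: {c:.6f}")
```

The fit is only as good as the assumed exponent. If the true error decayed as the inverse of the cell length instead, fitting against 1/V would give a biased limit, which is why a rigorous scaling law matters when only small systems are affordable.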

“Numerical analysis is a really powerful tool that may sometimes be underappreciated in science,” Lin said. “It can be very compelling for understanding complex physics phenomena, and that is precisely what we are trying to do here. Finite-size error is a piece of complicated physics phenomena that has been bothering scientists for some time, and it is not easy to figure out empirically sometimes, not to mention its origin. That is what Xin and I are trying to show in this recent work: that the big piece of the puzzle and the key to unlocking the interpretation and explanation is through numerical analysis.”

This work is supported in part by the U.S. Department of Energy, Office of Science, Office of Advanced Scientific Computing Research and Office of Basic Energy Sciences through the Scientific Discovery through Advanced Computing program; the Applied Mathematics Program of the U.S. Department of Energy Office of Advanced Scientific Computing Research; and the Simons Investigator Award.

About Berkeley Lab

Founded in 1931 on the belief that the biggest scientific challenges are best addressed by teams, Lawrence Berkeley National Laboratory and its scientists have been recognized with 16 Nobel Prizes. Today, Berkeley Lab researchers develop sustainable energy and environmental solutions, create useful new materials, advance the frontiers of computing, and probe the mysteries of life, matter, and the universe. Scientists from around the world rely on the Lab’s facilities for their own discovery science. Berkeley Lab is a multiprogram national laboratory, managed by the University of California for the U.S. Department of Energy’s Office of Science.

DOE’s Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit energy.gov/science.