CRD’s Chuck McParland to Retire after 41 Years of Advancing Scientific Data Collection

June 9, 2014

Contact: Jon Bashor, jbashor@lbl.gov, 510-486-5849

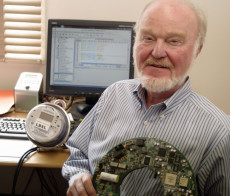

Chuck McParland holding one of the digital optical modules he helped design for the IceCube detector at the South Pole.

Ever since he built a radio telescope in his backyard as a kid in Chicago, Chuck McParland’s interest in science and technology has taken his work to the ends of the Earth and the depths of space. But after four decades and a year at UC Berkeley and then Berkeley Lab, McParland plans to officially retire at the end of June.

Interestingly, one of his early projects, an instrument package he helped build for the ISEE-3 satellite, is back in the news. Launched in 1978, the International Sun-Earth Explorer-3 (ISEE-3) satellite included a package to measure heavy ions emanating from the Sun and passing through the solar system. ISEE-3 was put in an orbit perpendicular to the orbits of the planets. At one point, it was hijacked (with permission!) and sent to rendezvous with a comet. The rendezvous was successful, but the new trajectory then carried ISEE-3 into deep space. Now it’s heading back to the vicinity of Earth, and there is discussion of trying to reconnect with the satellite.

“The satellite is apparently doing well, but the time for the project ran out,” McParland said. “Now there is interest in seeing if the instruments are still working.”

Whatever its operating condition, the satellite presumably still bears the signatures that McParland, Doug Greiner, Fred Bieser and post-doc Hank Crawford scratched into one of the pieces of shielding after they assembled it on the fifth floor of Bldg. 50B.

McParland, who went to Cape Kennedy to watch the satellite launch, was asked to help with the project because he had developed the computational tools to analyze heavy ions at the Bevatron. The heavy ions were generated at the Hilac, then some of the beam was peeled off and injected into the Bevatron. When the beams hit the target, different species of particles were generated and captured by the detector systems. To analyze the data, the researchers had bought a 16-bit PDP-11 computer with 8 kilobytes of core memory – and no software.

“They asked me, ‘Can you program this?’ and I said ‘Sure’,” McParland recalls. He wrote the program on punch cards and got the results back on rolls of paper tape. As a result, he spent a lot of time running back and forth between the Bevatron and Bldg. 50, up to 30 times a day, debugging the program. After writing the OS, he adapted it and integrated new features, such as recording the output on 9-track tapes for analysis on LBNL’s CDC 7600 computer, with results displayed on microfiche.

“I’ve been into electronics all my life and repaired TVs as a kid,” McParland said. “In junior high, I built a radio telescope for a science fair project – built an enormously large antenna in our backyard in Chicago. But I found the Sun!” He would also take the bus to the Illinois Institute of Technology, where he could run his punch-card programs on their computer.

First East, then West

After high school, he moved east to attend Case Western Reserve University in Cleveland, but then realized he had gone in the wrong direction. At that point, McParland changed course and headed west to UC Berkeley, where he majored in physics and philosophy, “which earned me no respect in either department,” he said with a laugh.

After graduating, he joined the Space Sciences Laboratory (SSL), where he worked on an intricate spectrometer, carried aloft on Black Brant sounding rockets, as part of a project to measure heavy ions in the upper atmosphere. These probes were launched from Fort Churchill in northern Manitoba, Canada. After 10 years at the SSL, he joined the lab and began working at the Bevatron.

McParland became head of the Data Acquisition Group in the Nuclear Science Division, working on both the hardware and software, and often getting called out on weekends. His group purchased the second DEC VAX 11/780 computer at the lab, using it for near-real-time analysis of data from the Bevalac. At the time, however, there was little in the way of networking. The lab’s backbone consisted of 200 feet of heavy coaxial cable stapled to plywood. “Ports” could be added every five feet using a vampire clamp, which sank a fang-like tap into the cable. McParland was actually allowed to drill his own tap, making the first network connection to the Bevalac.

McParland also got some unwanted visibility when one of his machines was hacked in 1986, an incident recounted in the 1989 book “The Cuckoo’s Egg” by former lab employee Clifford Stoll. McParland remembers that the attack disrupted weeks of work and prompted extensive security changes.

Scrutinizing Element 118 Claims

Another high-profile activity he was involved in was disproving a lab scientist’s claim, first announced in a 1999 paper, to have discovered element 118, a “superheavy” element comprising 118 protons and 175 neutrons. When the results could not be reproduced, questions arose about the veracity of the claim and McParland was selected to be a member of the lab’s review team. They examined logs from the VAX computers and found “unmistakable snips of edited email to create signatures of something that was never measured,” McParland said. The claim to element 118 was retracted in 2002.

When the Nuclear Science Division decided to get out of the computing business, McParland was brought over to the Information and Computing Services Division (now IT) by then-Division Director Stu Loken, specifically to work on videoconferencing tools. The division had already done extensive work in the field, developing Multicast Backbone technology, “MBone” for short, which had been used to set up a videoconference link with researchers at the South Pole in 1998.

Emergency Equipment for Antarctica

Although that equipment was disassembled, in June 1999 McParland and others used their expertise to research and test computer and imaging equipment that would enable remote medical diagnosis and consultation. The equipment was part of an emergency airdrop of medical supplies to help Dr. Jerri Nielsen, a doctor stationed in Antarctica who had discovered a lump in her breast. Because of extreme weather conditions at the South Pole, she couldn’t be evacuated until October or November. So the National Science Foundation organized an emergency airlift of medical supplies and related equipment, which were dropped from an Air Force plane as it flew 200 feet above the ice cap, guided only by burning barrels of jet fuel in the extreme darkness.

On June 29, when the decision to drop medical supplies was made, McParland took the lead in organizing the technology aspects of the situation. “We specified the right equipment for re-establishing the video link and helped track down the best microscope and equipment for the task,” McParland said.

The shipment contained two computer workstations and microscopes equipped with digital imaging cameras, including duplicates of most pieces of equipment so that if one was damaged when the supplies hit the ice at 60 mph, the other could still be used. The equipment allowed doctors in the United States to see and talk with people at the South Pole, help diagnose the woman’s condition, and transmit medical images and information back and forth – all capabilities developed as part of the Department of Energy’s program to develop new computing technologies.

“It was amazing -- everyone just jumped in with both feet,” McParland said, adding that researchers from NASA, the National Science Foundation and other organizations lent their expertise. “There was a real sense of ‘Do it now! Do it fast!’.”

Designing Deep Detectors

For his next project at the South Pole, McParland was able to take a slower, more deliberate approach. Working first on the AMANDA project, which then led to IceCube, McParland led the team that developed robust, reliable data acquisition software to be installed in detectors buried up to two miles deep in the Antarctic ice. IceCube consists of 4,800 detectors arranged on strings and covering a cubic kilometer of ice. The detectors serve as a telescope designed to study high-energy neutrinos.

Because no existing technology could handle the task, McParland’s team designed it from the ground down. Working with engineers at LBNL, they developed the unique computing platform inside the digital optical modules (DOMs) that would enable IceCube to pick out the rare signal of a high-energy neutrino colliding with a molecule of water. A DOM is a pressurized glass sphere the size of a basketball that houses an optical sensor, called a photomultiplier tube, which can detect photons and convert them into electronic signals that scientists can analyze.

Equipped with onboard control, processing and communications hardware and software, and connected in long strings of 60 each via an electrical cable, the DOMs can detect neutrinos with energies ranging from 200 billion to one quadrillion or more electron volts. In January and February 2005, the first IceCube cable, with its 60 DOMs, was lowered into a hole drilled through the Antarctic ice using jets of hot water. Plans called for a total of 80 strings of DOMs to be put in place over the next five years.

While the operating environment offers a unique challenge, McParland said at the time, “The biggest challenge is bridging the gap between the hardware side of the project and the software side.” The hardware developers needed some of the data acquisition software to test their systems, but that was just one aspect of the overall software project. “Getting the testing and the actual data acquisition components to all work together is a challenging job,” he said.

Adding to the difficulty was the push to engineer software that would support the 10- to 15-year lifespan of the experiment. “This means we had to put a lot more effort into the design of the software and use more modern programming practices than are typically used for other experiments of this sort,” McParland said.

Interestingly, when McParland himself made the trip to the South Pole, he saw one of the microscopes he had procured for the emergency medical drop.

Since his work with IceCube, McParland has focused closer to home. He has worked with researchers in the Environmental Energy Technologies Division on technologies for reducing home energy use by controlling major electrical loads over data networks already present within the home. He’s also been involved in researching network security issues associated with the recent deployment of Smart Meters by utilities throughout the country.

Several years ago, he and CRD’s Sean Peisert came up with an innovative scheme for improving the security of industrial and Smart Grid data networks by integrating basic physics modeling capabilities into network monitoring frameworks – in particular, LBNL’s Bro networking tool. Hopefully, this research will lead to more robust and secure power grids.

“The lab has always been an amazing place to work,” McParland said, looking back on his time here. “There’s never been a shortage of interesting and challenging projects.”

About Berkeley Lab

Founded in 1931 on the belief that the biggest scientific challenges are best addressed by teams, Lawrence Berkeley National Laboratory and its scientists have been recognized with 16 Nobel Prizes. Today, Berkeley Lab researchers develop sustainable energy and environmental solutions, create useful new materials, advance the frontiers of computing, and probe the mysteries of life, matter, and the universe. Scientists from around the world rely on the Lab’s facilities for their own discovery science. Berkeley Lab is a multiprogram national laboratory, managed by the University of California for the U.S. Department of Energy’s Office of Science.

DOE’s Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit energy.gov/science.